LanderPi: Autonomous Search-and-Rescue

This project was completed as part of a Robotics Control & Automation module during my MSc at Loughborough University.

I designed and implemented a ROS 2 software package for a search-and-rescue scenario using the LanderPi robotics platform.

I integrated perception, navigation, obstacle avoidance, and human–robot interaction into an end-to-end autonomous solution.

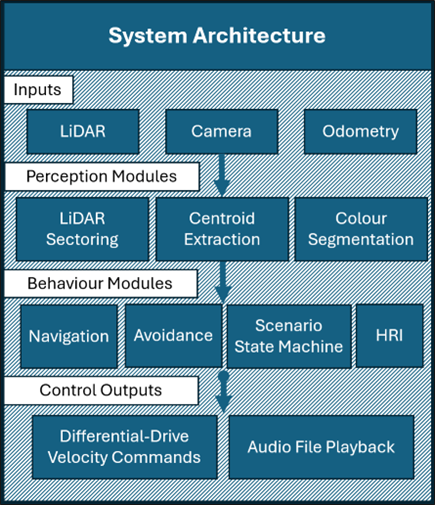

System Architecture

I delivered the project through a modular ROS 2 architecture. Each behaviour module interpreted sensor topics and generated motion commands using differential-drive kinematics.
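
As an illustration of the kinematics step, the sketch below converts a body velocity command (linear and angular) into left and right wheel speeds for a differential-drive base. It is not the project code: the function name, the wheel radius, and the track width are hypothetical placeholders rather than LanderPi measurements.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WheelSpeeds:
    left: float   # wheel angular speed, rad/s
    right: float  # wheel angular speed, rad/s


def twist_to_wheel_speeds(linear, angular, wheel_radius=0.033, track_width=0.16):
    """Differential-drive inverse kinematics.

    linear is the forward speed in m/s and angular is the yaw rate in rad/s
    (positive = counter-clockwise). The default geometry is a placeholder.
    """
    # Each wheel's ground speed is the body speed plus or minus the
    # contribution of rotation about the midpoint of the axle.
    v_left = linear - angular * track_width / 2.0
    v_right = linear + angular * track_width / 2.0
    return WheelSpeeds(v_left / wheel_radius, v_right / wheel_radius)
```

With this convention, a pure rotation command produces equal and opposite wheel speeds, and a pure forward command produces identical ones.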

The modular structure enabled me to make the most of limited on-robot test time; I could quickly replace modules to test specific functionality. I also used ROS 2 parameters to make on-the-fly adjustments.

I designed the software to be hardware-agnostic, requiring only minimal changes to run on an alternative platform.

Vision-Based Navigation

I used vision-based navigation throughout the project. A simple line-following program simulated a pre-marked route; when the robot reached the end of the line, it automatically transitioned to a more advanced beacon-navigation program.

This emulated an IR beacon being dropped in the search area. The software used HSV colour segmentation to detect a green cylinder beacon analogue.
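
HSV colour segmentation can be sketched in a few lines of pure Python. This is an illustrative stand-in rather than the project code, which would more plausibly use an image-processing library. The threshold values and function names are assumptions, and hue is expressed on the 0 to 1 scale used by Python's `colorsys` module.

```python
import colorsys


def green_mask(image, hue_range=(0.25, 0.45), min_sat=0.4, min_val=0.2):
    """Return a boolean mask of pixels whose HSV values fall in a green band.

    image is a list of rows, each row a list of (r, g, b) tuples in 0..255.
    The thresholds are placeholder values and would need tuning per scene.
    """
    mask = []
    for row in image:
        mask_row = []
        for r, g, b in row:
            h, s, v = colorsys.rgb_to_hsv(r / 255.0, g / 255.0, b / 255.0)
            in_band = hue_range[0] <= h <= hue_range[1]
            mask_row.append(in_band and s >= min_sat and v >= min_val)
        mask.append(mask_row)
    return mask


def centroid(mask):
    """Return the (x, y) centroid of the True pixels, or None if there are none."""
    count = sum_x = sum_y = 0
    for y, row in enumerate(mask):
        for x, hit in enumerate(row):
            if hit:
                count += 1
                sum_x += x
                sum_y += y
    if count == 0:
        return None
    return sum_x / count, sum_y / count
```

Thresholding in HSV rather than RGB keeps the hue test largely independent of brightness, which is why it tends to cope better with uneven lighting.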

Centroid tracking located the beacon, and I mapped the heading error to angular velocity using PID-based visual servoing.
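
A minimal sketch of the visual-servoing step, assuming the heading error is taken as the beacon centroid's normalised horizontal offset from the image centre. The class and function names, the gains, and the output limit are illustrative, not the values used on the robot, and the sketch omits anti-windup for brevity.

```python
class PID:
    """Basic PID controller with a symmetric output clamp (no anti-windup)."""

    def __init__(self, kp, ki, kd, output_limit):
        self.kp = kp
        self.ki = ki
        self.kd = kd
        self.output_limit = output_limit
        self._integral = 0.0
        self._prev_error = None

    def update(self, error, dt):
        if dt <= 0.0:
            raise ValueError("dt must be positive")
        self._integral += error * dt
        # No derivative term on the first sample, when there is no history.
        if self._prev_error is None:
            derivative = 0.0
        else:
            derivative = (error - self._prev_error) / dt
        self._prev_error = error
        output = self.kp * error + self.ki * self._integral + self.kd * derivative
        return max(-self.output_limit, min(self.output_limit, output))


def heading_error(centroid_x, image_width):
    """Normalised offset in [-1, 1]; positive when the target is left of centre."""
    half_width = image_width / 2.0
    return (half_width - centroid_x) / half_width
```

A positive error yields a positive angular velocity, which corresponds to a counter-clockwise (left) turn under the usual ROS sign convention, so the robot turns towards a beacon that appears left of centre.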

Reactive Obstacle Avoidance

I integrated reactive obstacle avoidance into the beacon-navigation program using the LanderPi’s LiDAR. Obstacle avoidance was triggered when the LiDAR range fell below a safety threshold.

The program then set a flag that gated beacon navigation and yaw-scanned in place. It compared the left and right LiDAR sectors, selected the freer side, and navigated towards it. I used hysteresis to prevent the oscillation observed during testing.
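
The gating and side-selection logic can be sketched as follows. This is a simplified illustration under stated assumptions, not the project code: it compares sectors within a single scan rather than during a yaw-scan, it applies the hysteresis to the trigger threshold (the source does not say where hysteresis was applied), it assumes beam angles increase counter-clockwise with zero straight ahead, and all names and distances are placeholders.

```python
import math


class ObstacleGate:
    """Hysteresis latch: trips below `trigger`, releases only above `release`."""

    def __init__(self, trigger=0.35, release=0.50):
        if release <= trigger:
            raise ValueError("release must be greater than trigger")
        self.trigger = trigger
        self.release = release
        self.active = False

    def update(self, min_range):
        if self.active:
            if min_range > self.release:
                self.active = False
        elif min_range < self.trigger:
            self.active = True
        return self.active


def freer_side(ranges, angle_min, angle_increment,
               sector=math.radians(90.0), max_range=3.0):
    """Return "left" or "right", whichever sector has the larger mean range."""
    left, right = [], []
    for i, r in enumerate(ranges):
        if math.isnan(r):
            continue  # drop invalid returns
        r = min(r, max_range)  # treat "no return" (inf) as open space
        angle = angle_min + i * angle_increment
        if 0.0 < angle <= sector:
            left.append(r)
        elif -sector <= angle < 0.0:
            right.append(r)
    mean_left = sum(left) / len(left) if left else 0.0
    mean_right = sum(right) / len(right) if right else 0.0
    return "left" if mean_left >= mean_right else "right"
```

The gap between the two thresholds is what suppresses oscillation: a reading that hovers around a single threshold would otherwise toggle avoidance on and off every cycle.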

This reactive avoidance strategy with scan-and-select logic enabled fully autonomous navigation through real-time decision making. SLAM was out of scope for the project timeframe.

Human–Robot Interaction

I achieved human–robot interaction through a vision-based gesture recognition interface (core gesture classifier by Fred).

I implemented the following enhancements:

- To prevent false detections, I added a hold-time debounce (~200 ms continuous detection) before confirming a gesture.

- On detection, the robot triggered a response behaviour (wave) and an audio prompt.

- I added a search/reacquisition behaviour: a pan-tilt scan at two elevations, followed by a base yaw-scan to broaden the search area.
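
The hold-time debounce in the first enhancement can be sketched as below. The class name and interface are hypothetical; only the roughly 200 ms hold time comes from the description above. Timestamps are passed in explicitly so the logic can be exercised without a clock.

```python
class HoldTimeDebounce:
    """Confirm a gesture only after it has been detected continuously."""

    def __init__(self, hold_time=0.2):
        self.hold_time = hold_time  # seconds of continuous detection required
        self._candidate = None
        self._since = None

    def update(self, label, now):
        """Feed one classifier result; return the confirmed label or None.

        label is the raw gesture label for this frame (None if nothing was
        detected) and now is a monotonic timestamp in seconds.
        """
        if label is None or label != self._candidate:
            # Detection dropped out or changed, so restart the hold timer.
            self._candidate = label
            self._since = now if label is not None else None
            return None
        if now - self._since >= self.hold_time:
            return label
        return None
```

As written, the confirmed label is returned on every frame for as long as the gesture is held, so a caller that wants a single trigger would need to act only on the first confirmation.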

Behaviour Control & Autonomy

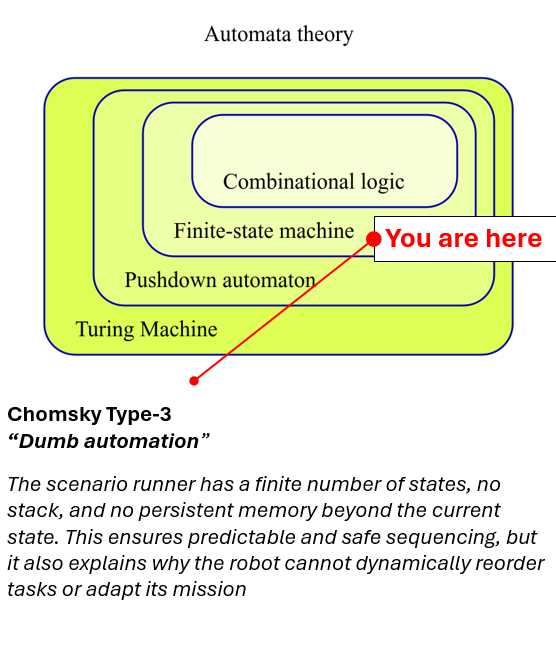

I implemented a finite-state controller to orchestrate the mission and achieve deterministic autonomy.

This module handled behaviour arbitration and conflict prevention. It also included timeouts and fail-safe handling for stall conditions.
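
A table-driven finite-state controller of this kind might look like the sketch below. The state names, event names, and timeout value are hypothetical and cover only part of the mission; the point is that one active state owns the motion commands at a time, and that a stall event or a state timeout falls through to a fail-safe.

```python
from enum import Enum, auto


class State(Enum):
    LINE_FOLLOW = auto()
    BEACON_NAV = auto()
    AVOID = auto()
    FAILSAFE = auto()


# (current state, event) -> next state. Event names are illustrative.
TRANSITIONS = {
    (State.LINE_FOLLOW, "line_end"): State.BEACON_NAV,
    (State.BEACON_NAV, "obstacle"): State.AVOID,
    (State.AVOID, "clear"): State.BEACON_NAV,
}

# Maximum dwell time per state in seconds (placeholder value).
TIMEOUTS = {State.AVOID: 10.0}


class MissionFSM:
    def __init__(self, now=0.0):
        self.state = State.LINE_FOLLOW
        self._entered = now

    def _enter(self, state, now):
        self.state = state
        self._entered = now

    def step(self, event, now):
        """Advance by one tick and return the resulting state."""
        if self.state is State.FAILSAFE:
            return self.state  # absorbing until reset externally
        timeout = TIMEOUTS.get(self.state)
        if event == "stall":
            self._enter(State.FAILSAFE, now)
        elif (self.state, event) in TRANSITIONS:
            self._enter(TRANSITIONS[(self.state, event)], now)
        elif timeout is not None and now - self._entered > timeout:
            self._enter(State.FAILSAFE, now)
        return self.state
```

Because every transition is listed in one table, an event that is not valid in the current state is simply ignored, which keeps the behaviour deterministic.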

Engineering Constraints & Optimisation

Key constraints included compute budget and limited on-robot testing; I mitigated these by rate-limiting telemetry/logging, keeping perception lightweight, and exposing thresholds and gains as ROS 2 parameters for rapid on-robot tuning.
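
Rate-limiting telemetry amounts to dropping messages that arrive sooner than a minimum interval after the last one sent. The helper below is a generic sketch with a hypothetical name, not the mechanism used in the project.

```python
class Throttle:
    """Allow an action at most once per `min_interval` seconds."""

    def __init__(self, min_interval):
        self.min_interval = min_interval
        self._last = None

    def ready(self, now):
        """Return True (and record the time) if enough time has elapsed."""
        if self._last is None or now - self._last >= self.min_interval:
            self._last = now
            return True
        return False
```

A control loop running at a high rate could call `ready()` each cycle and publish or log only when it returns True, which bounds the logging cost regardless of loop frequency.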

Outcome

The project delivered reliable autonomous navigation and interaction.

In lab trials, the robot completed the test route, acquired the beacon, negotiated obstacles, and responded to gestures.

I was awarded a first-class grade.

View the source code on GitHub.